Auto-Populated Drafts Feel Efficient — Until They Scale the Wrong Mistakes

Auto-populating proposal answers—whether from a content library or generative AI—can look like a clear productivity win: produce a complete draft instantly, then ask someone to “review and approve.” But cognitive science and human–AI research suggest a consistent risk: once content looks finished, humans are more likely to miss visible problems and over-trust what the system produced.

This isn’t an argument against AI. It’s an argument for workflow design that reflects how attention and trust actually work in real proposal review tasks.

This article draws on the same research and real-world proposal workflows discussed in our recent webinar, Why AI Proposal Automation Increases Risk — and What Best Practices Get Right, which examines how attention limits, automation bias, and workflow design interact in high-stakes proposal environments—and how teams can reduce risk without sacrificing efficiency.

Key takeaway: Auto-populating proposal content increases risk when it encourages passive review. Research shows humans miss visible errors and over-trust fluent automated outputs. Safer proposal automation requires deliberate choice, verification, and accountability.

1. Proofreading Isn’t a Reliable Safety Net in Auto-Populated Proposals

One of the strongest research foundations for this concern comes from Jeremy Wolfe and colleagues, who describe “Looked But Failed to See” (LBFTS) errors, sometimes called “normal blindness.” These are failures to notice clearly visible information, and they occur across a wide range of tasks.

Crucially, Wolfe explicitly uses proofreading as an everyday example. You can look directly at a typo and still miss it because the brain is a prediction engine — it fills in what it expects to see rather than carefully re-processing every element (Wolfe et al., 2022).

What that means for proposals

When a proposal answer is already inserted, formatted, and fluent, reviewers often shift into a “looks done” mode. At that point, review becomes more like confirmation (“does this generally sound right?”) than verification (“does this precisely meet the requirement, and is every claim accurate?”). The research suggests the latter is exactly what humans are prone to skip when proofreading finished text.

Takeaway: If your quality control depends on “they’ll catch it in proofreading,” you’re betting against how attention works.

2. Automation Bias: Suggested Answers Are “Sticky”

A second well-established effect is automation bias (also discussed as overreliance): when systems provide recommendations, humans tend to accept them too readily — particularly when verifying the output is tedious, time-pressured, or cognitively demanding.

A 2025 systematic review of automation bias in human–AI collaboration (Romeo & Conti, 2025) highlights that overreliance is a real concern in high-stakes environments and identifies user engagement and verification demands as among the most feasible and impactful levers for mitigating it.

The same review also warns that explanations alone don’t reliably improve decision accuracy and can sometimes reinforce misplaced trust if they increase cognitive burden or don’t match the user’s expertise.

What that means for proposals

Auto-population can unintentionally signal: “this is the answer.” And that “default correctness” framing can make it harder for users to challenge relevance, recency, compliance, and fit — especially when answers sound confident and polished.

Takeaway: When the system “answers for you,” people are more likely to rubber-stamp than re-evaluate.

3. “More Careful Review” Isn’t the Fix: Beneficial Friction Is

Organizations often respond to these risks by demanding “more careful review.” But the research suggests that passive proofing is the weak link — so adding more passive proofing often just adds time and fatigue, not reliability.

As noted above, the same systematic review identifies user engagement and verification demands as among the most feasible and effective ways to reduce overreliance (Romeo & Conti, 2025).

MIT Sloan Management Review reported a field experiment in which professionals worked with LLM-generated text while a tool highlighted potential errors and omissions, adding a layer of “beneficial friction” (Gosline et al., 2024). The result: higher accuracy without a significant increase in time to complete the task.

MIT Sloan also defines beneficial friction as intentional cognitive and procedural “speed bumps” added to improve responsible and successful use of generative AI.
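To make the idea of a “speed bump” concrete, here is a minimal Python sketch of a highlighter that flags sentences a reviewer should verify before accepting a draft. It is not the tool used in the MIT Sloan Management Review experiment; the function name `flag_for_verification` and the trigger patterns in `RISK_PATTERNS` are hypothetical choices for illustration, and a real team would tune them to its own risk areas.

```python
import re

# Hypothetical trigger patterns (assumptions for illustration only).
RISK_PATTERNS = {
    "numeric claim": re.compile(r"\d"),
    "absolute language": re.compile(r"\b(always|never|guarantee[sd]?)\b", re.IGNORECASE),
    "compliance claim": re.compile(r"\b(compliant|certified|accredited)\b", re.IGNORECASE),
}


def flag_for_verification(text: str) -> list[tuple[str, list[str]]]:
    """Return (sentence, reasons) pairs for sentences that match a risk pattern."""
    # Naive sentence split: break after ., !, or ? when followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    flagged = []
    for sentence in sentences:
        reasons = [name for name, pattern in RISK_PATTERNS.items()
                   if pattern.search(sentence)]
        if reasons:
            flagged.append((sentence, reasons))
    return flagged


if __name__ == "__main__":
    draft = ("We value partnership. Our platform is certified. "
             "Uptime was 99.9% last year.")
    for sentence, reasons in flag_for_verification(draft):
        print(f"VERIFY ({', '.join(reasons)}): {sentence}")
```

The point of the sketch is where the friction lands: low-risk sentences pass through untouched, while claims that could be wrong or out of date are surfaced for an explicit check.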

What this means for proposals

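Telling reviewers to “be more careful” leaves the “this is the answer” signal of an auto-populated draft in place. Targeted prompts at high-risk points, such as factual claims, compliance statements, and customer specifics, give reviewers a concrete reason to stop and check relevance, recency, compliance, and customer fit, even when the content sounds confident and polished.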

Takeaway: The right “speed bumps” reduce uncritical adoption and improve outcomes.

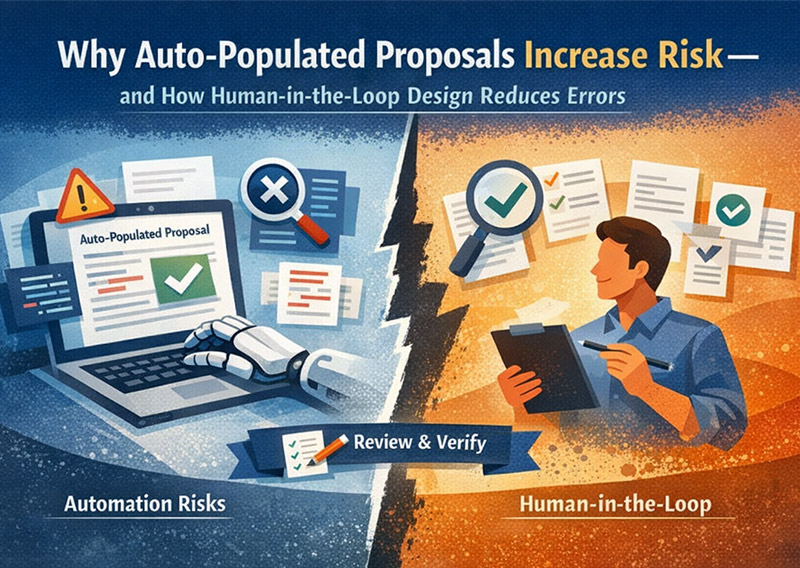

4. Risky vs. Safer Proposal Automation Patterns

Putting this together, the safer and more scalable approach is not “no automation.” It’s automation that recommends and supports judgment rather than silently deciding.

Here’s the difference in practice:

Risky Proposal Automation Pattern

Auto-populate → skim → submit

- Encourages passive review

- Triggers normal blindness during proofreading

- Increases overreliance on what the system inserted

Safer Human-in-the-Loop Proposal Workflow

Recommend → preview → choose → insert → verify

- Requires micro-decisions that keep attention engaged

- Creates accountability (“I chose this answer”)

- Adds targeted friction at the right points (claims, compliance, customer specifics)

This is the heart of “human-in-the-loop” that actually works: humans are not the last checkbox; they are the decision-makers throughout.
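The recommend → preview → choose → insert → verify sequence can be sketched as a small state machine in which no step can be skipped. This is an illustrative Python sketch only, not a description of any product’s implementation; the names `AnswerSlot`, `Step`, and the `verify` parameters are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Optional


class Step(Enum):
    RECOMMENDED = auto()
    PREVIEWED = auto()
    CHOSEN = auto()
    INSERTED = auto()
    VERIFIED = auto()


# Each step is only reachable from the step listed here; skipping ahead fails.
_PREVIOUS = {
    Step.PREVIEWED: Step.RECOMMENDED,
    Step.CHOSEN: Step.PREVIEWED,
    Step.INSERTED: Step.CHOSEN,
    Step.VERIFIED: Step.INSERTED,
}


@dataclass
class AnswerSlot:
    """One proposal question plus the candidate answers the system recommends."""
    question: str
    candidates: list
    step: Step = Step.RECOMMENDED
    chosen_text: Optional[str] = None
    chosen_by: Optional[str] = None
    history: list = field(default_factory=lambda: [Step.RECOMMENDED])

    def _advance(self, target: Step) -> None:
        if self.step is not _PREVIOUS[target]:
            raise RuntimeError(f"cannot go from {self.step.name} to {target.name}")
        self.step = target
        self.history.append(target)

    def preview(self) -> list:
        self._advance(Step.PREVIEWED)
        return list(self.candidates)

    def choose(self, index: int, author: str) -> None:
        text = self.candidates[index]  # fail on a bad index before changing state
        self._advance(Step.CHOSEN)
        self.chosen_text = text
        self.chosen_by = author  # accountability: "I chose this answer"

    def insert(self) -> str:
        self._advance(Step.INSERTED)
        return self.chosen_text

    def verify(self, claims_checked: bool, requirement_met: bool) -> None:
        # Targeted friction: both checks must be confirmed explicitly.
        if not (claims_checked and requirement_met):
            raise ValueError("verification incomplete")
        self._advance(Step.VERIFIED)
```

In this sketch, calling `insert()` on a fresh slot raises an error, because nothing has been previewed or chosen yet. That is the code equivalent of refusing to auto-populate.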

A Practical Design Checklist (Use This to Evaluate Any Proposal Tool)

If you’re assessing proposal automation, ask whether the system:

- Treats AI as recommendation, not auto-answer.

- Supports preview before commit (evaluate fit before insertion).

- Builds in beneficial friction at high-risk points.

- Prompts verification for factual claims and requirements mapping.

- Keeps accountability clear: the author owns the final response.
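For teams comparing several tools, the checklist above can be recorded as a simple pass/fail evaluation. The following Python sketch is one hypothetical way to do that; the function name `evaluate_tool` is an assumption, and unanswered items are deliberately treated as failures so that gaps stay visible.

```python
# Checklist items taken from the list above.
CHECKLIST = (
    "Treats AI as recommendation, not auto-answer",
    "Supports preview before commit",
    "Builds in beneficial friction at high-risk points",
    "Prompts verification for factual claims and requirements mapping",
    "Keeps accountability clear: the author owns the final response",
)


def evaluate_tool(answers: dict) -> list:
    """Return the checklist items a tool does not satisfy.

    `answers` maps a checklist item to True or False. Items that are
    missing from `answers` count as not satisfied.
    """
    return [item for item in CHECKLIST if not answers.get(item, False)]


if __name__ == "__main__":
    gaps = evaluate_tool({CHECKLIST[0]: True, CHECKLIST[1]: True})
    for item in gaps:
        print("Gap:", item)
```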

The Expedience Approach to Safer Proposal Automation

If proposal accuracy, compliance, and defensibility matter, the goal isn’t to eliminate humans — it’s to design the system around real human attention limits, and to add targeted “speed bumps” where verification matters most.

Whether content comes from a library or AI, auto-populating answers encourages passive review and over-trust. Our workflow uses recommendations and deliberate choices to reduce missed errors and improve quality.

Sources

- Wolfe et al., “Normal blindness: when we Look But Fail To See” (Trends in Cognitive Sciences, 2022) [cell.com]

- Romeo & Conti, “Exploring automation bias in human–AI collaboration” (AI & Society, published online July 3, 2025) [link.springer.com]

- Gosline et al., “Nudge Users to Catch Generative AI Errors” (MIT Sloan Management Review, May 29, 2024) [sloanreview.mit.edu]

- MIT Sloan “What is beneficial friction?” definition [mitsloan.mit.edu]
